The European Commission launched its age verification app on April 15, 2026, and declared it met “the highest privacy standards in the world.” By the afternoon of April 16, a security consultant had bypassed the entire authentication system using nothing more than a text editor and two minutes of his time.

This is not a story about a minor software bug. It is a story about an architecture that should not have survived a junior developer’s code review, yet shipped under a €4 million government contract to roughly 450 million European citizens as the foundational layer of what Brussels openly plans to expand into a continent-wide digital identity infrastructure.

At CADChain, we build cryptographic IP protection systems for 3D design data. Our threat model assumes adversaries who are patient, technically skilled, and highly motivated. The EU age verification app’s threat model appears to have assumed adversaries who either do not exist or are very polite. Let me walk you through every vulnerability, connect it to the pattern of recent EU data breaches that should have informed this design, and explain why a well-run startup with a fraction of this budget would have built something materially more secure.

And then let me ask the question none of the press releases are asking: if you only need to verify someone’s age once, why does the credential expire?

TL;DR: The EU Age Verification App Was Hacked in 2 Minutes

The EU age verification app stores authentication controls, including PIN encryption, rate-limiting counters, and biometric bypass flags, in a plain-text, user-editable local configuration file. Security consultant Paul Moore bypassed the full authentication system in under two minutes by deleting two values and restarting the app. A separate March 2026 analysis found the issuer component cannot verify that passport verification actually occurred on the device, meaning the entire trust chain is unverifiable. These are not bugs. They are design decisions that any startup security review would have flagged in a first pass. The deeper question is what the credential expiry dates, verification limits, and EUDI Wallet integration roadmap say about what this infrastructure is actually being built to do.

The Vulnerability Breakdown: What the Code Actually Does Wrong

Let’s go layer by layer, from the surface-level PIN bypass down to the architectural trust failure.

Layer 1: The shared_prefs Storage Model

When a user sets up the EU age verification app, they create a PIN. The app encrypts this PIN and stores it in a directory called shared_prefs on the Android device. This is the first failure.

shared_prefs, Android’s SharedPreferences system, stores data as XML in the app’s internal storage directory. On a non-rooted device with standard permissions, this file is readable only by the app itself. On a rooted device, or any device where the app’s data directory is accessible, this file is trivially readable and editable by any process with the right permissions.

The security model of shared_prefs is isolation by permission, not cryptographic protection. Any competent Android developer knows this. The correct approach for sensitive authentication data on Android is the Android Keystore system, which on most modern devices keeps cryptographic keys in hardware-backed storage tied to the device’s Trusted Execution Environment. The key material never leaves that secure hardware. It cannot be extracted or edited regardless of whether the device is rooted. Multiple developers responding to Paul Moore’s thread on X asked the same question: “Why did they not use the secure enclave?” It is a reasonable question. The Android Keystore has been available since API level 18, and Apple’s Secure Enclave has shipped since the iPhone 5S. This is not an obscure feature.

Layer 2: The PIN Encryption Is Decorative

The PIN is encrypted before storage in shared_prefs. This sounds like a security measure. It is not.

The encrypted PIN values, stored as PinEnc and PinIV, are not cryptographically tied to the identity vault that holds the user’s actual verification credentials. This means that if you delete PinEnc and PinIV from the configuration file and restart the app, you can set a new PIN. The app then presents credentials from the old verified identity profile as valid under the new PIN you just created.

The encryption is what security practitioners call “security theater.” It adds the appearance of protection without adding actual protection. A PIN that is encrypted but not bound to the data it protects might as well not be encrypted. The correct approach is cryptographic binding: the PIN-derived key should be used to encrypt the identity vault itself, so that changing or removing the PIN without the original value renders the vault inaccessible. This is standard key derivation architecture. It is taught in undergraduate cryptography courses.
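To make the idea of cryptographic binding concrete, here is a minimal Python sketch that uses only the standard library. It is not the app’s code. The function names, the iteration count, and the vault layout are assumptions made for illustration, and the HMAC-based keystream is a stand-in for a vetted AEAD cipher such as AES-GCM, which a production system would use instead.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # illustrative PBKDF2 work factor, not taken from the app


def _derive_keys(pin: str, salt: bytes) -> tuple[bytes, bytes]:
    """Stretch the PIN into an encryption key and a separate MAC key."""
    material = hashlib.pbkdf2_hmac("sha256", pin.encode(), salt, ITERATIONS, dklen=64)
    return material[:32], material[32:]


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """HMAC-SHA256 in counter mode: a stdlib stand-in for a real AEAD cipher."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hmac.new(key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()
        counter += 1
    return out[:length]


def seal_vault(pin: str, plaintext: bytes) -> dict:
    """Encrypt the vault under keys derived from the PIN, then authenticate it."""
    salt, nonce = os.urandom(16), os.urandom(16)
    enc_key, mac_key = _derive_keys(pin, salt)
    stream = _keystream(enc_key, nonce, len(plaintext))
    ciphertext = bytes(a ^ b for a, b in zip(plaintext, stream))
    tag = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    return {"salt": salt, "nonce": nonce, "ciphertext": ciphertext, "tag": tag}


def open_vault(pin: str, vault: dict) -> bytes:
    """Decrypt only if the supplied PIN reproduces the authentication tag."""
    enc_key, mac_key = _derive_keys(pin, vault["salt"])
    expected = hmac.new(mac_key, vault["nonce"] + vault["ciphertext"], hashlib.sha256).digest()
    if not hmac.compare_digest(expected, vault["tag"]):
        raise ValueError("wrong PIN or tampered vault")
    stream = _keystream(enc_key, vault["nonce"], len(vault["ciphertext"]))
    return bytes(a ^ b for a, b in zip(vault["ciphertext"], stream))
```

In this arrangement there is no stored PIN value to delete. Setting a new PIN simply yields keys that do not open the old vault. A short numeric PIN is still guessable offline, which is why a real design would also mix in a hardware-bound key.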

Layer 3: Rate Limiting as an Editable Integer

The app implements rate limiting to prevent brute-force PIN guessing. The rate limit counter is stored as a simple integer in the same shared_prefs file.

Reset it to zero and the app forgets how many guesses have already been made. The rate limit resets on every edit of the configuration file. This is the equivalent of a combination lock where the lockout counter is written on a sticky note attached to the lock.

Effective rate limiting for authentication systems requires server-side state, hardware attestation, or at minimum a counter stored in tamper-resistant hardware storage. Storing it in an editable flat file is a known anti-pattern, the kind of local-state tampering that OWASP’s Mobile Application Security Testing Guide tells assessors to test for.
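As a rough illustration of the server-side option, the sketch below keeps the failure count somewhere the client cannot edit. The class name, the policy values, and the injectable clock are all hypothetical. A real deployment would persist this state and would have to reconcile it with the app’s privacy promises.

```python
import time


class AttemptLimiter:
    """Tracks failed PIN attempts in state the client device cannot edit."""

    def __init__(self, max_failures=5, lockout_seconds=300.0, clock=time.monotonic):
        self.max_failures = max_failures        # hypothetical policy value
        self.lockout_seconds = lockout_seconds  # hypothetical policy value
        self._clock = clock
        self._failures = {}
        self._locked_until = {}

    def allowed(self, account_id: str) -> bool:
        """True if this account is not currently locked out."""
        return self._clock() >= self._locked_until.get(account_id, 0.0)

    def record_failure(self, account_id: str) -> None:
        """Count a failed attempt and start a lockout once the limit is hit."""
        count = self._failures.get(account_id, 0) + 1
        self._failures[account_id] = count
        if count >= self.max_failures:
            self._locked_until[account_id] = self._clock() + self.lockout_seconds
            self._failures[account_id] = 0

    def record_success(self, account_id: str) -> None:
        """A correct PIN clears the failure count."""
        self._failures.pop(account_id, None)
```

Because the counter lives with the limiter rather than in a file on the handset, editing local storage does not buy an attacker extra guesses.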

Layer 4: Biometric Authentication as a Boolean Flag

The UseBiometricAuth value in the same configuration file is a boolean. Set it to false and the app skips biometric verification entirely.

Biometric authentication is supposed to be a security layer that cannot be bypassed by an attacker who has physical access to the device. Storing the “is biometrics required” flag in the same editable file as every other security control means an attacker can disable the strongest authentication factor the app offers with a single character change.

The correct approach is to make biometric requirements enforced at the cryptographic level. If the identity vault’s decryption key is stored in the Keystore with a user-authentication-required constraint (set with setUserAuthenticationRequired(true) on the key’s KeyGenParameterSpec) tied to biometric confirmation, the vault cannot be opened without biometric authentication regardless of what any configuration file says.

Layer 5: The Issuer Cannot Verify Passport Verification Occurred

This is the deepest flaw, the one identified in a March 2026 security analysis of the open-source codebase, and the one that undermines the entire trust model.

The app’s issuer component, the part of the system that creates and signs age credentials, has no way to verify that passport verification actually occurred on the user’s device. It issues a signed credential based on a claim, not based on verified evidence. A relying party (a website checking age credentials) receives a signed certificate but cannot know whether that certificate was generated after genuine passport verification or after some other process that the issuer erroneously accepted.

The researchers who found this flaw noted the trap embedded in fixing it: to verify that passport verification occurred, the issuer would need to receive cryptographic proof from the device, and the most direct form of that proof would involve sending passport data, including the user’s name and document number, to the server. That would fundamentally contradict the app’s privacy promise of zero data transmission.

This is not a bug. It is a fundamental design tension between privacy preservation and verification integrity that was apparently not resolved during development.
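One direction sometimes proposed for this tension is to have trusted hardware attest to the outcome of the passport check rather than to the passport data. The Python sketch below is only a model of that idea, under heavy assumptions. The HMAC with a shared key stands in for a hardware attestation signature and certificate chain, and every name in it is hypothetical. Whether such a scheme fits the app’s actual architecture is an open question.

```python
import hashlib
import hmac
import os

# Stand-in for a key held in trusted hardware. Real attestation uses an
# asymmetric key pair and a certificate chain, not a shared secret.
ATTESTATION_KEY = os.urandom(32)
VERIFIED = b"passport_verified_and_over_18=1"


def device_attest(nonce: bytes, passport_checked: bool) -> dict:
    """Hypothetical trusted component: attests only to what really happened."""
    statement = VERIFIED if passport_checked else b"passport_verified_and_over_18=0"
    mac = hmac.new(ATTESTATION_KEY, nonce + statement, hashlib.sha256).digest()
    return {"statement": statement, "mac": mac}


class Issuer:
    """Issues a credential only against a fresh, verifiable attestation."""

    def __init__(self, verification_key: bytes):
        self._key = verification_key
        self._pending = set()

    def new_challenge(self) -> bytes:
        nonce = os.urandom(16)
        self._pending.add(nonce)
        return nonce

    def issue(self, nonce: bytes, attestation: dict) -> dict:
        if nonce not in self._pending:
            raise PermissionError("unknown or replayed challenge")
        self._pending.discard(nonce)  # each challenge is single-use
        expected = hmac.new(
            self._key, nonce + attestation["statement"], hashlib.sha256
        ).digest()
        if not hmac.compare_digest(expected, attestation["mac"]):
            raise PermissionError("attestation does not verify")
        if attestation["statement"] != VERIFIED:
            raise PermissionError("device did not attest to passport verification")
        return {"over_18": True}
```

The attested statement carries no name or document number, so the issuer learns only that the check happened. The cost is that the issuer must trust the attesting hardware and the code running inside it.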

The Browser Extension Bypass

Paul Moore went a step further. He recreated the app’s credential generation logic using a browser extension and used it to generate fake verification responses that platforms would accept as valid proof-of-age. This means the bypass is not limited to users with rooted devices who can edit shared_prefs. A sufficiently motivated developer can generate valid-looking age credentials entirely outside the app.

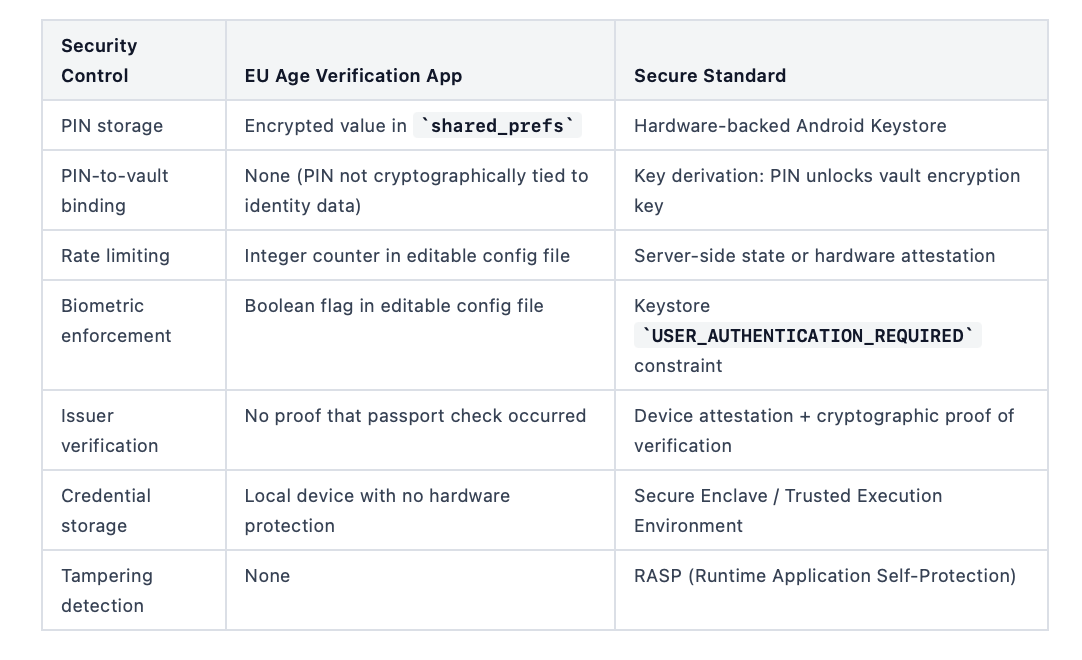

Here is the comparison table that summarizes where the app’s implementation sits against established secure mobile authentication standards:

Every item in the left column represents a deliberate design choice, not an accident. Someone approved this architecture.

Why Startups Would Have Caught This in Week One

At CADChain, before we ship any feature that touches user credentials or identity data, we run it through a standard security review process. This is not unusual. Any startup operating in a regulated space with real liability on the line does the same.

That process would have flagged every one of these issues before the first line of production code was written. Here is how.

Threat modeling catches shared_prefs in the design phase. When you map out attack surfaces for a mobile authentication system, “attacker with physical device access” is one of the first threat actors you model. For that threat actor, any security control stored in an editable file is no control at all. That storage design would have been eliminated in the first threat modeling session, before the architecture was finalized.

Code review catches the rate-limiting integer. Any security-focused code review that checks authentication controls would flag a rate-limiting counter stored in the same mutable file as the credentials it protects. This is a textbook example taught in code review training.

Penetration testing catches the boolean bypass. A standard mobile pen test includes attempts to manipulate local configuration files. The UseBiometricAuth boolean would have been found in the first automated scan, let alone a manual review.

The issuer verification gap would have been caught in architecture review. Before implementation begins, a security architecture review examines the trust chain end-to-end. The question “how does the issuer know passport verification actually occurred?” would have been asked, and the absence of an answer would have blocked the design from moving forward.

The EU contracted T-Systems, one of Europe’s largest IT service companies, and Scytales, a digital identity specialist, for this project. The combined organizational capability to identify these issues exists. The question is whether the procurement process, the timeline pressure, and the political urgency around the April 15 launch date created conditions where known risks were accepted rather than resolved.

A startup with real revenue on the line cannot accept those risks, because they come straight out of the founder’s pocket and the company’s reputation. Government contracts work differently.

The Data Breach Context Brussels Ignored

The EU age verification app was designed while European data breach statistics were at historic highs. The people who approved its architecture had access to the same public data we do.

European data protection authorities recorded 443 breach notifications per day in 2025, a 22% increase from the previous year and the first time the daily average exceeded 400 since GDPR came into force in 2018. Total GDPR fines across Europe in 2025 reached approximately €1.2 billion, bringing the cumulative total since 2018 to over €7.1 billion.

The breach that should have been most instructive for the age verification team was the Cegedim healthcare database breach. In March 2026, it emerged that 15.8 million patient records had been stolen from Cegedim, one of the largest healthcare data breaches in European history, with 165,000 files containing doctors’ free-text notes including HIV status, psychiatric diagnoses, and sexual orientation. The French data regulator CNIL had already fined Cegedim €800,000 in September 2024 for illegally processing this exact category of sensitive health data. The fine produced no meaningful architectural change. The breach followed four months later.

The pattern is consistent. Europe has an enforcement framework that issues large fines. Those fines do not reliably produce secure systems. The Cegedim case, the 2025 TikTok fine of €530 million for illegal data transfers, the Meta €200 million fine for “consent or pay” GDPR violations: these are regulatory actions against organizations that made deliberate design choices the regulators later deemed non-compliant.

The EU age verification app made design choices that the security community identified as non-compliant with secure mobile development standards within hours of the code being publicly accessible. The pattern holds.

What makes this particularly consequential is scale. The age verification app is being built as infrastructure for the European Digital Identity Wallets planned for rollout across all 27 member states by end of 2026, covering roughly 450 million people. A security flaw in a single startup’s authentication system might expose tens of thousands of users. A structural flaw in the identity infrastructure underpinning the EU digital identity ecosystem is a different category of risk entirely.

Security researchers warned about exactly this. Over 400 security researchers signed an open letter warning that centralized identity verification systems create honeypots vulnerable to cyberattack. The Cegedim breach demonstrates what happens to centralized repositories of sensitive EU citizen data when the architecture is not built to withstand sophisticated attackers.

The Questions Nobody Is Answering: Expiry, Limits, and Mission Creep

This is the section where the technical analysis becomes something harder to dismiss.

When the app launched, developers on X started noticing details that do not fit cleanly into the stated purpose of simple age verification. The Collective Sensemaking Project account posted a pointed observation alongside the Paul Moore thread: “Apart from the things you highlighted, why do users only have a certain number of age verifications available? Why does proof of age have an expiration date? Once I’m over 18, I will always be over 18. I’m not turning any younger.”

These are not rhetorical questions. They are architectural questions with real answers, and those answers deserve scrutiny.

Why do age credentials expire?

Age is not a time-limited attribute. A 30-year-old does not become 17 again after 30 days. The only technical reasons to build expiry into an age credential are: to force periodic re-engagement with the verification system, to enable revocation of credentials (which implies a revocation authority), or to limit how long an attestation is treated as fresh.

None of these reasons are related to protecting children from age-restricted content. All of them are related to maintaining an ongoing, refreshable relationship between the user and the verification infrastructure.

Why is there a limit on how many times a user can verify their age?

A verification limit makes no sense in the context of child protection. A parent who wants to verify their adult status to access age-restricted content should be able to do so as many times as they use age-restricted services. A fixed verification count either creates friction that has no safety justification, or it creates a mechanic that requires periodic re-credentialing, which again maintains an ongoing relationship with the system.

Why is the app built on the same technical specifications as the EUDI Wallet?

The Commission is explicit about this. The age verification app “is built on the same technical specifications as the European Digital Identity Wallets, ensuring compatibility and future integration.” The stated reason is efficiency and interoperability. The unstated implication is that the age verification infrastructure is not a standalone tool. It is an onramp.

European Digital Rights (EDRi) described age verification as a “sledgehammer approach” that increases the risk of data breaches and surveillance, noting that even tools claiming anonymity could fail due to metadata leaks or implementation flaws. EDRi specifically flagged concerns about “mission creep,” the gradual expansion of verification infrastructure beyond its stated purpose once the technical capability exists.

This is not a novel concern in technology policy. The EU’s COVID digital certificate was introduced as a temporary public health measure. Von der Leyen explicitly invoked it as the model for the age verification app. The COVID certificate became the template for digital identity infrastructure in 78 countries, according to the Commission’s own press conference.

The question of what the age verification infrastructure becomes after it normalizes is not a conspiracy. It is a product roadmap question, and the answer is sitting in the Commission’s own published materials about the EUDI Wallet.

The 30-day re-verification pattern

Reports from developers testing the app noted credential validity periods that require users to re-verify their age at regular intervals. The specific interval has not been officially confirmed at the time of writing, but the presence of any expiry date on a credential attesting to a permanent biological fact is a design choice that warrants a clear public justification.

When CADChain designs IP protection credentials for 3D design files, the immutability of the timestamp is the point. A file registered on March 12, 2024 carries that timestamp permanently. A credential that certifies a piece of digital information and then expires is certifying something other than a permanent fact. In the case of age verification, the expiring credential is not certifying age. It is certifying a recent re-authentication event. Those are different things, and conflating them matters.
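The distinction can be shown in a few lines of Python. The 30-day validity window below is a hypothetical value, since the actual interval has not been confirmed, and both function names are ours.

```python
from datetime import date, timedelta


def is_over(birth: date, on: date, threshold: int = 18) -> bool:
    """The permanent fact: has the person reached the threshold age on this date?"""
    had_birthday = (on.month, on.day) >= (birth.month, birth.day)
    years = on.year - birth.year - (0 if had_birthday else 1)
    return years >= threshold


def credential_valid(issued: date, on: date, validity_days: int) -> bool:
    """The time-limited fact: was the credential issued recently enough?"""
    return issued <= on <= issued + timedelta(days=validity_days)


birth = date(1996, 4, 15)
issued = date(2026, 4, 15)
later = issued + timedelta(days=31)

still_adult = is_over(birth, later)                 # age predicate on day 31
still_accepted = credential_valid(issued, later, 30)  # hypothetical 30-day window
```

Once the first function returns true for a person, it stays true on every later date. The second function flips to false on its own schedule, which is the sense in which an expiring credential attests to recency rather than to age.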

What Secure Identity Architecture Actually Looks Like

For context, here is how the security principles the EU app claims to implement are correctly applied in production systems.

Zero-knowledge proofs done right

The app is supposed to use zero-knowledge proofs (ZKP) to allow users to prove they are over 18 without revealing their date of birth. This is a sound cryptographic approach. ZKP systems like zk-SNARKs allow a prover to demonstrate knowledge of a secret (such as a birth date satisfying an age condition) without revealing the secret itself.

The problem is that ZKP at the credential level does not help if the layers beneath it are broken. If an attacker can bypass the PIN and biometric controls to access another user’s credential and then generate a zero-knowledge proof from that credential, the ZKP layer provides privacy but not security. You are proving something true about a credential that was obtained or issued fraudulently.

The Digital Credentials API and OID4VP

The app uses the W3C Digital Credentials API with OID4VP (OpenID for Verifiable Presentations) as a fallback. These are legitimate open standards for presenting verifiable credentials to relying parties. The standards themselves are sound. The issue is that the credential issuance and storage layer, which these standards rely on for security, is where the app fails.

What the Android Keystore actually provides

The Android Keystore system generates and stores cryptographic keys in hardware-backed secure storage on devices that support it. Keys can be constrained so that they are only usable after user authentication such as a biometric check (setUserAuthenticationRequired(true)), they are non-exportable and so stay on the device where they were generated, and they are usable only by the app that created them. These constraints are enforced by the device’s Trusted Execution Environment. They cannot be lifted by editing configuration files, and the key material cannot be extracted by gaining root access or by any software-level attack that does not also compromise the device’s TEE.

Using the Keystore correctly, the EU app could have ensured that:

- The identity vault is encrypted with a key that only unlocks after biometric authentication

- The key is hardware-bound and cannot be exported or transferred to another device

- Rate limiting is enforced by hardware-backed or server-side state rather than by a counter in a config file

- Editing files in the app’s data directory cannot unlock the vault, and enrolling a new biometric can be set to invalidate the key

None of this requires exotic technology. It requires using the security features that Android provides, following the documentation Google publishes for exactly this use case.
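The principle behind those points can be modelled in plain Python. This is a toy, not the Android API. On a real device the equivalent behavior comes from generating the key with KeyGenParameterSpec and setUserAuthenticationRequired(true), and the class, method names, and timeout here are invented for illustration. Python also cannot truly hide the key the way secure hardware does.

```python
import hashlib
import hmac
import os
import time


class ToyKeystore:
    """Pure-Python model of an auth-bound key that is used but never exported."""

    def __init__(self, auth_validity_seconds=30.0, clock=time.monotonic):
        self._key = os.urandom(32)  # real hardware keeps this out of app memory
        self._validity = auth_validity_seconds
        self._clock = clock
        self._last_auth = None

    def user_authenticated(self) -> None:
        """On a real device only the trusted biometric subsystem can signal this."""
        self._last_auth = self._clock()

    def mac(self, message: bytes) -> bytes:
        """Use the key, but only within the window after authentication."""
        fresh = (
            self._last_auth is not None
            and self._clock() - self._last_auth <= self._validity
        )
        if not fresh:
            raise PermissionError("user authentication required")
        return hmac.new(self._key, message, hashlib.sha256).digest()


# An app-level flag like this has no bearing on whether the key can be used.
app_config = {"UseBiometricAuth": False}
```

The check sits with the key rather than with the configuration, so flipping UseBiometricAuth changes nothing about what the key will do.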

The Startup Security Checklist the EU Skipped

Here is what a security-conscious startup would have run through before shipping an identity application to production. This is adapted from the review process we apply at CADChain when building credential systems.

Pre-Architecture

- Define the complete threat model including physical device access, network interception, malicious apps, and server-side compromise

- Map every security control to the threat it mitigates and verify the control actually addresses that threat

- Identify all data classified as special category under GDPR Article 9 and ensure no design decision permits exposure of that data without explicit legal basis

Architecture Review

- Verify no security-critical state (authentication flags, rate limit counters, biometric requirements) is stored in user-accessible or editable storage

- Confirm PIN or password-derived keys are used to encrypt protected data, not merely stored alongside it

- Validate that the issuer-verifier trust chain includes cryptographic proof of verification events, not just claims

Implementation Review

- Audit all use of SharedPreferences or equivalent storage systems; confirm no authentication state is stored there

- Verify Keystore usage with appropriate constraints for all credential and authentication key material

- Confirm biometric bypass is architecturally impossible, not just policy-prohibited
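The SharedPreferences audit item lends itself to a simple automated check. The sketch below scans a preferences file for keys whose names suggest authentication state. The sample file is a trimmed mock: PinEnc, PinIV, and UseBiometricAuth are the names reported for the app, while PinAttempts, ui_language, and the name pattern itself are our assumptions.

```python
import re
import xml.etree.ElementTree as ET

# Name fragments that suggest security-critical state (a heuristic, not a standard).
SENSITIVE = re.compile(r"pin|biometric|attempt|auth|lock", re.IGNORECASE)


def audit_shared_prefs(xml_text: str) -> list[str]:
    """Return the preference keys that look like authentication state."""
    root = ET.fromstring(xml_text)
    names = (child.get("name") for child in root)
    return sorted(name for name in names if name and SENSITIVE.search(name))


SAMPLE = """<map>
    <string name="PinEnc">BASE64-PLACEHOLDER</string>
    <string name="PinIV">BASE64-PLACEHOLDER</string>
    <int name="PinAttempts" value="2" />
    <boolean name="UseBiometricAuth" value="true" />
    <string name="ui_language">en</string>
</map>"""

findings = audit_shared_prefs(SAMPLE)
```

A name-based scan like this only surfaces candidates for manual review. It will miss obfuscated keys and can flag harmless ones.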

Penetration Testing

- Rooted device testing: attempt to read and modify all application data directories

- Configuration manipulation testing: attempt to change boolean flags and counter values

- Credential replay testing: confirm credentials cannot be replayed after PIN reset or biometric bypass

- Browser extension / external credential generation: attempt to generate credentials outside the app that relying parties would accept

Third-Party Security Audit

- Independent security audit by a firm with no relationship to the development contractor

- Full disclosure of findings before production launch

- Public bug bounty program post-launch with defined scope covering authentication bypass and credential forgery

The EU app was audited for GDPR compliance. It was not audited for security by an independent party before launch. Those are different things.

FAQ: EU Age Verification App Security for Technical Readers

What specific cryptographic failures does the EU age verification app contain?

The primary cryptographic failure is the absence of binding between the PIN-derived key material and the identity credentials the PIN is supposed to protect. Standard practice for PIN-protected credential stores is to use the PIN to derive a symmetric key (via a KDF like PBKDF2 or Argon2) and use that key to encrypt the credential vault. Without the correct PIN, the vault cannot be decrypted. The EU app stores encrypted PIN values alongside the credential vault in the same editable storage, without any cryptographic link between them. An attacker can delete the PIN values, create a new PIN, and access the old vault because the vault’s encryption (if any) is not dependent on the original PIN.

Why is storing authentication controls in SharedPreferences such a serious flaw?

Android’s SharedPreferences is a lightweight XML-based key-value store in the app’s internal storage directory. While access is restricted by Linux file permissions at the OS level, this protection fails under several common attack conditions: rooted devices, physical device extraction with forensic tools, ADB access with developer mode enabled, and malicious apps with elevated permissions. Security-sensitive state like authentication flags, rate-limiting counters, and cryptographic material should be protected by keys held in the Android Keystore, which on supporting devices keeps keys in hardware-backed storage enforced by the Trusted Execution Environment. TEE-backed keys cannot be extracted or modified by software-level attacks, rooting, or physical access to the device’s filesystem.

What is the EUDI Wallet and how does it relate to the age verification app’s security concerns?

The European Digital Identity Wallet (EUDI Wallet) is a mandated EU-wide digital identity system each member state must make available to citizens by end of 2026 under the eIDAS 2.0 regulation. The age verification app is explicitly built on the same technical specifications as the EUDI Wallet and is designed to be integrated into it. This means the app is not a standalone child safety tool but a component of the future EU digital identity infrastructure. Security flaws in the app’s architecture are therefore relevant not just to age verification but to the security model of the broader EU digital identity system, which will eventually handle government credentials, identity attestations, and potentially other verifiable attributes for hundreds of millions of European citizens.

What does the credential expiry date in the app suggest architecturally?

Age is a permanent attribute. A person who is 30 today will not be younger tomorrow. There is no security justification for an age credential to expire if the purpose is purely to verify that a user is above a threshold age. Credential expiry in identity systems typically serves one of three purposes: forcing re-authentication (which implies ongoing system engagement), enabling revocation (which implies a revocation authority with a list of invalidated credentials), or limiting the validity window for freshness (which implies the attribute being attested is time-sensitive). None of these purposes apply to a static age threshold. The presence of expiry dates and verification limits in the app suggests the system is designed to maintain a recurring relationship between user and infrastructure, not to perform a one-time age check.

How does the issuer verification gap undermine zero-knowledge proof claims?

Zero-knowledge proofs at the presentation layer (proving age to a website without revealing birth date) provide privacy for the credential holder. They do not provide security at the issuance layer. If the issuer component cannot verify that passport verification actually occurred on the user’s device before issuing a signed credential, then a malicious actor can potentially trigger credential issuance without completing genuine passport verification. The signed credential is then cryptographically valid (it carries a real issuer signature) but was generated without actual identity verification. A zero-knowledge proof derived from this credential proves the credential exists and satisfies the age condition; it does not prove the credential was issued legitimately. The privacy guarantee holds; the security guarantee does not.

What would a properly designed mobile age verification system look like?

A secure mobile age verification system would use the device’s hardware-backed Keystore to store credential key material. The credential vault would be encrypted with a key that requires biometric authentication to access, enforced at the hardware level. The rate limiting for PIN attempts would rely on hardware-backed or server-side attempt counters, which cannot be reset by editing files on the device. The issuer would require cryptographic proof of verification events (for example, a device attestation certificate proving the passport was read by the app on the claimed device) before issuing credentials. Credentials would be bound to the specific device’s hardware identity. Biometric bypass would be architecturally impossible, not merely configuration-prohibited. An independent security audit would be completed and findings disclosed before public launch.

How does the EU age verification app compare to existing commercial age verification solutions?

Several commercial age verification providers in Europe have built systems using hardware attestation, on-device biometric matching without data transmission, and ZKP credential presentation. These systems vary in their privacy-utility tradeoffs, but the better ones avoid the shared_prefs storage model entirely, use Keystore-backed credential storage, and tie biometric requirements to key access constraints rather than configuration flags. The EU app’s architecture is not state of the art for commercial age verification. It resembles an early prototype more than a production security system.

What does the browser extension bypass reveal about the credential verification system?

Paul Moore recreated the app’s credential generation logic in a browser extension and used it to generate age credentials that platforms accepted as valid. This means the credential format and verification protocol used by the app is either insufficiently tied to device identity or insufficiently tied to the issuance process. A secure credential system would incorporate device attestation (proof that the credential was generated on a specific legitimate device) and issuer-binding (proof that the credential was generated by the authorized issuer after completing the required verification steps). Without these, any developer who understands the credential format can generate credentials without using the app at all.

What are the NIS2 implications for platforms that rely on the EU age verification app?

The EU’s NIS2 Directive, which member states were required to transpose into national law by October 2024, imposes cybersecurity requirements on organizations operating in certain sectors and on digital infrastructure providers. Platforms that integrate the EU age verification app as a security control and experience a security incident related to its known vulnerabilities may face scrutiny over whether they exercised adequate due diligence in their security risk assessment. Relying on a third-party security control with publicly disclosed unresolved vulnerabilities, without additional compensating controls, could be viewed unfavorably by NIS2 supervisory authorities. Organizations integrating the app should document their risk assessment, including awareness of the disclosed vulnerabilities, and implement compensating controls where possible.

What is the correct way for a startup to approach EU age verification compliance given the app’s security issues?

Given the publicly disclosed security issues, startups should not rely on the EU age verification app as their sole compliance mechanism for DSA Article 28. Instead, evaluate third-party age verification providers that have undergone independent security audits and can demonstrate compliance with the DSA guidelines on technical robustness and data minimization. Design your verification architecture to be compatible with the EUDI Wallet credential format (W3C Digital Credentials API, OID4VP) so you can switch to wallet-based verification when it becomes available. Conduct your own DPIA under GDPR Article 35, document your risk assessment including awareness of the EU app’s vulnerabilities, and maintain records of the compensating controls you implement. Do not implement facial biometric age estimation without a full Article 9 GDPR analysis, as this constitutes special category data processing.

Conclusion: What This Tells Us About Who Should Build Security Infrastructure

I want to be direct about what this failure represents.

The EU age verification app was not built by incompetent people. T-Systems is a major IT services company. Scytales specializes in digital identity. The problem is not capability. The problem is incentive structure and accountability.

When a startup ships a product with authentication vulnerabilities of this severity, the consequences are direct and personal: breached users, legal liability, regulatory fines, loss of customers, and in many cases, company failure. The feedback loop between security decision and security consequence is short and unforgiving.

When a government-contracted team ships a product with authentication vulnerabilities of this severity, the contract has already been paid, the announcement press conference has already been held, and the political narrative around protecting children is already in circulation. The feedback loop is slower, more diffuse, and mediated by procurement procedures and political communication strategies.

As of April 17, 2026, the European Commission has not released an official fix or response to the vulnerabilities. The app launched two days ago.

At CADChain, we secure intellectual property for 3D design files using cryptographic timestamping and blockchain anchoring. Our users trust us with the evidence that proves they created something first. We cannot afford to have that evidence be forgeable or bypassable. The threat model is real, the stakes are real, and the architecture has to match.

Europe deserves identity infrastructure built to the same standard. Right now, it got something that a motivated teenager with 20 minutes and a text editor can bypass.

The question of whether to trust the EUDI Wallet when it arrives in your member state by end of 2026, built on the same architectural foundations as this app, is a question every European startup and every European citizen should be actively asking.